I recently completed an industrial market mapping project for the US Construction & Engineering sector: identifying and categorizing 100 significant companies with detailed data on each.

Normally, this kind of market mapping takes me 2-3 full days. I'd spend hours digging through industry databases, visiting company websites, categorizing players, and building spreadsheets. It's essential work, but it's tedious.

This time, I decided to let AI do the heavy lifting. Seventy-five minutes later, I had a comprehensive market map with all three categorization frameworks and detailed company data ready for review. Here's exactly how I did it.

The Challenge

The client needed more than just a list of companies. I decided to approach this by building a framework that would help PE firms and strategics understand how money flows in this industry. That meant three layers of categorization: first by company type, then by primary service offering, and finally by commercial logic. The last one was key. How do buyers actually think about these vendors? Where does budget come from? Is it CapEx or OpEx? Government contracts or private development?

To make this more useful, I added detailed data: official websites, current market caps or revenue figures, key services in 3-5 words, and operating regions. For 100 companies. All verified with 2024-2025 sources.

I've done this kind of research before, and I knew what I was up against. Even with my industry knowledge, manually gathering and organizing this would take days. But I'd been experimenting with AI tools for research tasks, and this felt like the perfect test case.

Finding the Right Framework

I started by spending 30 minutes with ChatGPT and Claude just thinking through the approach. This turned out to be the most valuable half hour of the entire project. Without a clear framework, AI just gives you data. With one, it gives you insights.

ChatGPT helped me land on a three-cut structure. Cut 1 would be the raw list of 100 companies. Cut 2 would categorize them by what they do: general contractors, engineering firms, specialty trades, tech platforms, and so on. But Cut 3 was where it got interesting.

ChatGPT pushed me to think about commercial logic. How do buyers actually make decisions? A general contractor building a data center thinks differently than one building affordable housing. One is mission-critical infrastructure with government backing; the other is discretionary commercial real estate driven by development economics. That distinction matters to investors.

Claude, on the other hand, wanted to jump straight into execution. It gave me company names and categories, but it missed that strategic nuance. This is why I ended up using both tools. ChatGPT for thinking, Claude for doing.

Executing the Three Cuts

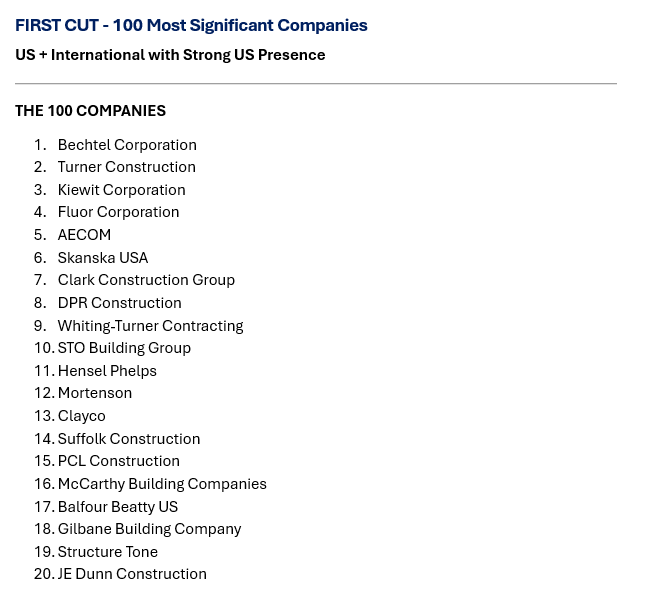

With my framework clear, I turned to Claude Sonnet 4.5 for execution. The first cut was straightforward. I asked Claude to list the 100 most significant companies in the US Construction & Engineering ecosystem, making sure to include everyone from massive general contractors like Bechtel and Turner to niche players like construction robotics startups.

Two minutes later, I had my list. Claude didn't just give me the obvious giants. It included specialty contractors, engineering consultancies, architecture firms, construction tech platforms, equipment rental companies, and even major developers. The comprehensiveness impressed me.

Cut 2 took about three minutes. I asked Claude to categorize these 100 companies by their primary service type. It created 10 clean categories: General Construction & Contracting, Engineering Services, Architecture & Design, Specialty Contracting, Construction Technology, Equipment Rental, and so on. Almost every company landed in the right bucket; I caught the few miscategorizations later in my own review.

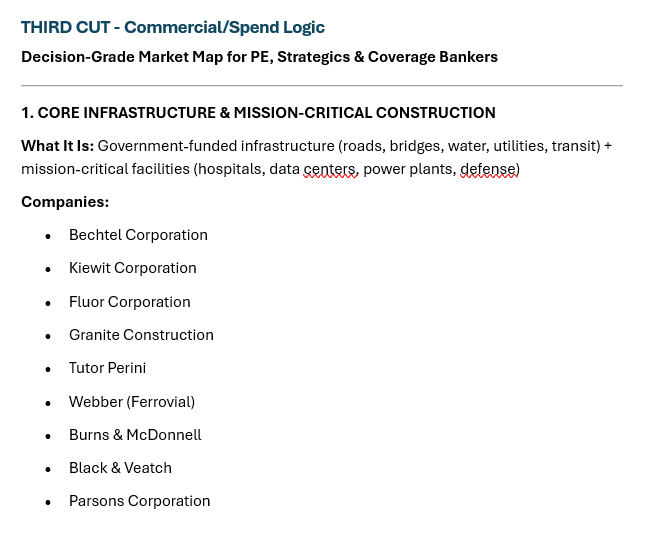

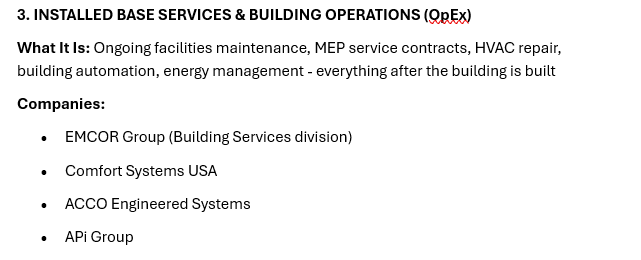

Cut 3 was where the magic happened. I asked Claude to reorganize everything by commercial and spend logic. Think about how money actually flows. Is this CapEx or OpEx? Is it government-funded infrastructure or private development? Is it a one-time project or an ongoing service contract?

Claude came back with 11 categories that made immediate sense. Core Infrastructure & Mission-Critical Construction captured the Bechtels and Kiewits doing bridges and power plants. Commercial Real Estate & Discretionary Construction grouped the Turners and Skanskas building offices and hotels. Installed Base Services covered ongoing building operations. Construction Technology platforms got their own bucket. Each category told a story about where the money comes from and how buying decisions get made.

Total time for all three cuts: 20 minutes.
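One way to picture the three cuts is as a single record per company that carries both categorizations, so the same list can be regrouped by service type or by spend logic. The sketch below is only an illustration, not the format Claude produced: the `Company` class and `group_by` helper are hypothetical names, and the two rows use categories named in this article, with the service-type assignment inferred from the description of Bechtel and Turner as general contractors.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Company:
    name: str          # Cut 1: the raw list
    service_type: str  # Cut 2: what the company does
    spend_logic: str   # Cut 3: how buyers fund and buy the work


# Two illustrative rows; the full map would hold 100 of these.
COMPANIES = [
    Company("Bechtel", "General Construction & Contracting",
            "Core Infrastructure & Mission-Critical Construction"),
    Company("Turner", "General Construction & Contracting",
            "Commercial Real Estate & Discretionary Construction"),
]


def group_by(companies, field):
    """Group company names by the value of one categorization field."""
    groups = defaultdict(list)
    for company in companies:
        groups[getattr(company, field)].append(company.name)
    return dict(groups)


by_service = group_by(COMPANIES, "service_type")  # Cut 2 view
by_spend = group_by(COMPANIES, "spend_logic")     # Cut 3 view
```

Both firms share one bucket in the Cut 2 view and split into two in the Cut 3 view, which is the distinction the spend-logic cut is meant to surface.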

Getting the Details

Now I had the framework, but I still needed detailed data on each company. This is where I built a custom prompt that I could run in batches. I processed 10-15 companies at a time with this exact prompt:

Research these [X] construction/AEC companies and compile current (2024-2025) data:

COMPANIES:

[List of 10-15 company names]

DATA NEEDED (5 columns):

1. Company Name (official)

2. Website (official URL)

3. Market Cap/Funding

- Public: Current market cap (USD)

- Private: Latest revenue/funding

4. Key Services (3-5 keywords max)

5. Operating Regions/HQ (HQ location + key markets)

REQUIREMENTS:

- Verify all data is from 2024-2025 sources via web search

- Mark private companies as "Private - [revenue/funding amount]"

- Keep services concise (e.g., "General contracting, MEP, Design-build")

- Format regions as "HQ: City, State | Markets"

OUTPUT:

Excel spreadsheet (.xlsx) with the 5 columns listed above.

The specificity mattered. When I was vague in earlier attempts, Claude gave me inconsistent formats. When I was precise about what I wanted and how to format it, the outputs were clean and usable.
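If you prefer to prepare the batches ahead of time rather than by hand, the batching step can be sketched in a few lines of Python. This is a minimal sketch under my own assumptions: `chunk` and `build_prompt` are hypothetical helpers, the company names are placeholders, and `spec` stands in for the fixed part of the template (DATA NEEDED through OUTPUT), which you would paste in unchanged. It only builds the prompt text; sending it to a tool is still a manual step.

```python
def chunk(items, size):
    """Split a list into consecutive batches of at most `size` items."""
    if size < 1:
        raise ValueError("size must be at least 1")
    return [items[i:i + size] for i in range(0, len(items), size)]


def build_prompt(batch, spec):
    """Fill in the company-specific part of the template for one batch."""
    names = "\n".join(batch)
    return (
        f"Research these {len(batch)} construction/AEC companies "
        "and compile current (2024-2025) data:\n\n"
        f"COMPANIES:\n{names}\n\n{spec}"
    )


# Placeholder names; in practice this is the 100-company list from Cut 1.
companies = [f"Company {n}" for n in range(1, 101)]

# Stand-in for the fixed part of the template shown above.
spec = "DATA NEEDED (5 columns): ..."

batches = chunk(companies, 10)
prompts = [build_prompt(batch, spec) for batch in batches]
```

With 100 companies and a batch size of 10, this yields ten prompts with identical wording apart from the company list, which keeps the output format consistent from batch to batch.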

What Actually Happened

Let me be honest about what worked and what didn't. Claude's market mapping was exceptional. The company lists were comprehensive, the categorizations were logical, and it caught both the obvious players and the important niche firms I might have overlooked. The three-cut framework worked perfectly, and the final spend-logic categorization was exactly what the client needed.

ChatGPT helped me see how to move from Cut 2 to Cut 3. It caught the nuance of organizing by commercial logic rather than just service type, which Claude had missed.

I processed companies using both Claude and Perplexity Pro. The outputs were largely similar, though Perplexity required multiple iterations on some batches to deliver clean, formatted results. The whole data extraction phase took 25 minutes.

I still needed to review everything. I spent 10-15 minutes scanning the outputs for obvious errors, checking that major players weren't missing, and validating that the categorization made sense. Some companies could arguably fit in multiple categories, and I had to use my judgment to place them.
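Part of that scan is mechanical and could be scripted: checking that each row follows the format rules the prompt asked for. The sketch below is an assumption about how such a check might look, not something I ran on this project. The `check_row` function, the dictionary keys, and the sample rows are all hypothetical, and the rows are placeholders rather than real company data. It checks format only; whether a figure is actually correct still needs a human.

```python
import re

# "HQ: City, State | Markets", as requested in the prompt.
REGION_PATTERN = re.compile(r"^HQ: [^,|]+, [^|]+ \| .+$")
# "Private - [revenue/funding amount]", as requested in the prompt.
PRIVATE_PATTERN = re.compile(r"^Private - .+$")


def check_row(row):
    """Return a list of format problems found in one company row."""
    problems = []

    value = row.get("market_cap", "")
    if value.startswith("Private") and not PRIVATE_PATTERN.match(value):
        problems.append("private companies should read 'Private - [amount]'")

    keywords = [k for k in row.get("services", "").split(",") if k.strip()]
    if not 3 <= len(keywords) <= 5:
        problems.append("services should list 3-5 keywords")

    if not REGION_PATTERN.match(row.get("regions", "")):
        problems.append("regions should read 'HQ: City, State | Markets'")

    return problems


# Placeholder rows, not real company data.
clean_row = {
    "market_cap": "Private - [amount]",
    "services": "General contracting, MEP, Design-build",
    "regions": "HQ: City, State | Markets",
}
messy_row = {
    "market_cap": "Private",
    "services": "General contracting",
    "regions": "City, State",
}

clean_problems = check_row(clean_row)
messy_problems = check_row(messy_row)
```

The clean row produces no problems, while the messy row is flagged on all three rules, so it would be sent back for another iteration.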

The bottom line: 75 minutes of AI-assisted work (30 on the framework, 20 on the three cuts, 25 on data extraction), plus 10-15 minutes of review, versus the 2-3 days this normally takes. That's over 90% time savings. The output was about 80-85% complete on first pass, which is more than good enough for an initial draft and early-stage research.
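The savings figure is easy to check with quick arithmetic. The sketch below assumes an 8-hour working day, which is my assumption for converting "2-3 days" into minutes; `savings` is just an illustrative helper.

```python
MINUTES_PER_WORKDAY = 8 * 60  # assumption: an 8-hour working day


def savings(ai_minutes, manual_days):
    """Fraction of time saved relative to the manual approach."""
    manual_minutes = manual_days * MINUTES_PER_WORKDAY
    return 1 - ai_minutes / manual_minutes


low = savings(75, 2)    # against a 2-day manual effort
high = savings(75, 3)   # against a 3-day manual effort

# Worst case: add the upper end of the review time (15 minutes)
# and compare against the shorter manual estimate.
with_review = savings(75 + 15, 2)

print(f"{low:.1%} to {high:.1%}")  # prints "92.2% to 94.8%"
```

Even in the worst case, with 15 minutes of review added and only a 2-day baseline, the saving stays just above 90%.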

What I Learned

The biggest lesson: invest time in your approach before asking AI to execute. Those 30 minutes I spent thinking through the framework saved me hours of cleaning up scattered outputs. AI is phenomenal at execution, but you need to give it a clear target.

Different AI tools have different strengths. I learned to use ChatGPT when I need strategic reasoning and Claude when I need comprehensive research and execution. Perplexity excels at finding recent, hard-to-locate data on private companies. Don't expect one tool to do everything perfectly.

Batch processing is crucial. Processing 10-15 companies at a time hit the sweet spot between efficiency and quality. Smaller batches would have been tedious; larger ones would have sacrificed accuracy.

The more specific your prompts, the better the output. When I was vague, I got vague results. When I specified exact formats, data requirements, and output structures, AI delivered exactly what I needed.

That said, my domain knowledge was essential. I caught several miscategorizations that AI made, spotted gaps in the company list, and knew when to question data that seemed off. AI accelerates your work; it doesn't replace your judgment.

When This Approach Works

This workflow is perfect when you need to map 50+ companies quickly, you have a clear sense of what framework you want, and you're early enough in your research that an 80% complete draft is valuable. It works when public information is sufficient for your needs.

It doesn't work when you need highly proprietary data that isn't publicly available, when the categorization requires deep expertise that AI can't replicate, or when you're working with a small enough set of companies that manual research is just as fast. And obviously, never make investment decisions based solely on AI output without thorough validation.

How You Can Replicate This

Begin with the strategic thinking. Use AI to help you design categorization schemes that answer the question: how will my audience use this? What decisions will they make? Don't skip this step.

Then move to execution. Generate your company list first, being specific about scope and geography. Then apply your categorization frameworks one at a time. Simple, direct prompts work better than complex ones.

For data extraction, use the prompt template I shared above and customize it for your needs. Process companies in batches of 10-15. Cross-check critical data points with Perplexity or other sources.
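The cross-check can also be made systematic once both tools' outputs are loaded as rows keyed by company name. The following is a sketch under my own assumptions: `find_disagreements` and `normalize` are hypothetical helpers, the field names are illustrative, and the sample rows are placeholders rather than real data. It only flags where two sources differ; deciding which one is right is still your job.

```python
def normalize(value):
    """Lowercase and collapse whitespace so trivial differences are ignored."""
    return " ".join(str(value).lower().split())


def find_disagreements(source_a, source_b, fields):
    """Compare two {company: {field: value}} mappings.

    Returns (flagged, missing): field-level mismatches for companies in
    both sources, and companies that appear in only one source.
    """
    flagged = []
    for name in sorted(source_a.keys() & source_b.keys()):
        for field in fields:
            a = source_a[name].get(field, "")
            b = source_b[name].get(field, "")
            if normalize(a) != normalize(b):
                flagged.append((name, field, a, b))
    missing = sorted(source_a.keys() ^ source_b.keys())
    return flagged, missing


# Placeholder rows, not real company data.
claude_rows = {
    "Example Co A": {"website": "https://example.com", "hq": "City, State"},
    "Example Co B": {"website": "https://example.org", "hq": "City, State"},
}
perplexity_rows = {
    "Example Co A": {"website": "https://example.com", "hq": "city,  state"},
    "Example Co B": {"website": "https://example.org", "hq": "Other City, State"},
    "Example Co C": {"website": "https://example.net", "hq": "City, State"},
}

flagged, missing = find_disagreements(
    claude_rows, perplexity_rows, ["website", "hq"]
)
```

Here the casing and spacing difference for Example Co A is ignored, the differing headquarters for Example Co B is flagged, and Example Co C is reported as present in only one source.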

Finally, review everything yourself. Spot-check data against sources you trust. Look for obvious gaps or errors. Use your domain knowledge to refine categorizations. The AI gives you a strong draft; you turn it into something you'd stake your reputation on.

Tool-wise, I used Claude Sonnet 4.5, ChatGPT, and Perplexity Pro. Total cost is about $60 per month for all three subscriptions. Given that this approach saves me days of work on projects like this, the ROI is absurd.

Trust But Verify

I did a high-level review to catch obvious errors, but I'm waiting for client feedback before diving into detailed validation like spot-checking figures and cross-referencing benchmarks.

My confidence level sits around 80-85% overall. Company selection feels about 90% solid. My confidence in the categorization logic is around 85%, though some companies could arguably fit in multiple buckets. Data accuracy is probably 75-80%, with public company figures being highly reliable and private company estimates being softer. That's perfectly acceptable for an initial draft. I know where the uncertainties are, and I'm transparent about them.

The Bottom Line

AI didn't replace my analytical skills on this project. It replaced the tedious data gathering that used to consume days of my time. It let me spend 30 minutes thinking strategically about frameworks instead of 3 days hunting for company websites and revenue figures.

The workflow is simple: think strategically with ChatGPT, execute comprehensively with Claude, verify thoroughly with your own knowledge. Be specific in your prompts. Process data in manageable batches. Review everything before you ship it.

If you're doing market mapping, competitive analysis, or similar research-heavy work, try this approach. The time savings are real, and with proper validation, the quality holds up. Just remember: AI is your research assistant, not your replacement.