1. Setting the Context

The Task

I was tasked with identifying private PET recycling companies for a client conducting market due diligence in the circular economy and rPET space. The requirements were specific:

Who: Company names and ownership structures

Where: Geographic locations (headquarters, facilities)

What: Input materials (types of PET waste they accept) and output products (recycled pellets, rPET flakes, fiber, etc.)

When: Company establishment dates and operational history

Business Model: Pure recyclers vs. integrated manufacturers

The deliverable was a comprehensive database of company profiles: essentially a complete market map of the private PET recycling industry.

Why AI?

The challenge with private companies is that they rarely appear in standard databases. Information is fragmented across trade publications, obscure industry websites, and regional directories. Done manually, this would take three to four days, perhaps a week, of searching, cross-referencing, and verification. I knew AI could systematically search these scattered sources, but the key would be instructing it properly.

My Starting Point

I'm somewhere between novice and intermediate with AI tools, actively exploring how to integrate AI efficiently into research workflows. I've learned that the most critical factor isn't just using AI, but spending time upfront to craft the right instruction (the prompt).

2. The Approach

Tools Used

Claude (Extended Thinking, Sonnet 4.5): For creating the prompt and shaping the research strategy

Perplexity Pro (Deep Research enabled): For executing the research task

The Process: Creating the Prompt

This wasn't a quick "throw a question at AI" situation. I invested 5-10 minutes thinking through my approach before touching any AI tool.

Step 1: I shared the task with Claude, provided context about what I needed and why, then asked it to think deeply about the best approach and share alternatives.

Step 2: Claude asked follow-up questions about scope, data quality requirements, and deliverable format. I answered these to narrow the approach.

Step 3: Once we aligned on the strategy, I instructed Claude to create a concise, accurate prompt optimized for high-quality output by market research standards.

The Actual Prompt

Here's the complete prompt I used in Perplexity:

PROMPT: PET Recycling Company Research

OBJECTIVE: Identify and profile private companies globally that buy, recycle, or use PET plastic waste. Create a comprehensive master list with verified data.

SCOPE:

Target: Private PET recyclers, PET waste buyers, rPET users

Geography: Global focus - North America, Europe, Asia-Pacific, Latin America, MEA

Exclude: Public companies, government facilities, general plastic recyclers without PET focus

RESEARCH APPROACH:

- Search industry associations (NAPCOR, APR, PRE) and recycling directories

- Review trade publications (Recycling Today, Plastics News) from last 2-3 years

- Identify companies through supply chain research (PET waste suppliers → their buyers)

- Target geographic recycling hubs (California, Netherlands, China, India, etc.)

- Use targeted searches: "[Region] PET recycling companies private", "rPET manufacturers [Country]"

DATA TO COLLECT: For each company, gather:

{
  "company_name": "",
  "country": "", "city": "", "region": "",
  "year_established": "",
  "company_type": "PET Recycler / Waste Buyer / rPET User",
  "input_materials": ["post-consumer bottles", "PET flakes", etc.],
  "end_products": ["rPET pellets", "rPET fiber", etc.],
  "processing_capacity": "tons/year if available",
  "website": "",
  "data_quality": "High/Medium/Low",
  "sources": ["URLs"]
}

DELIVERABLES:

- CSV file with all companies

- Summary table (CSV) with key fields: Company | Country | Type | Est. Year | Inputs | Outputs

- Market overview (500 words): regional breakdown, key clusters, trends, data quality assessment

- Research gaps document: missing data, challenges, recommendations

QUALITY TARGETS:

- Minimum 50 companies (aim for 75-100+)

- Cover at least 4 major regions

- Cross-verify information from 2+ sources

- Flag uncertain data as "Approx." or "Unconfirmed"

- Confirm companies are currently operational

SEARCH KEYWORDS: "PET recycling company", "rPET manufacturer", "bottle-to-bottle recycling", "PET flake supplier", "chemical recycling PET", "recycled polyester"

BEGIN RESEARCH

Data Inputs

In the prompt itself, I specified:

Geographic scope (global with regional priorities)

Target companies (private only, with operational facilities)
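The JSON template and the two CSV deliverables in the prompt fit naturally into a small data pipeline. Below is a minimal Python sketch. It assumes the research output can be exported as a JSON array whose keys match the template; Perplexity's actual export format may differ. The names `CompanyProfile`, `load_profiles`, `write_master_csv`, and `write_summary_csv` are hypothetical.

```python
import csv
import io
import json
from dataclasses import asdict, dataclass, field, fields
from typing import Iterable, TextIO


@dataclass
class CompanyProfile:
    """One record, with fields named after the prompt's JSON template (hypothetical class)."""
    company_name: str
    country: str = ""
    city: str = ""
    region: str = ""
    year_established: str = ""
    company_type: str = ""  # "PET Recycler", "Waste Buyer", or "rPET User"
    input_materials: list[str] = field(default_factory=list)
    end_products: list[str] = field(default_factory=list)
    processing_capacity: str = ""  # tons/year, if available
    website: str = ""
    data_quality: str = ""  # "High", "Medium", or "Low"
    sources: list[str] = field(default_factory=list)


# Column headers of the summary table requested in the prompt.
SUMMARY_HEADER = ["Company", "Country", "Type", "Est. Year", "Inputs", "Outputs"]


def load_profiles(raw_json: str) -> list[CompanyProfile]:
    """Parse a JSON array of records; unexpected keys raise TypeError, exposing schema drift."""
    return [CompanyProfile(**record) for record in json.loads(raw_json)]


def write_master_csv(profiles: Iterable[CompanyProfile], out: TextIO) -> None:
    """Write every field; list fields are joined with '; ' so each company stays on one row."""
    writer = csv.DictWriter(out, fieldnames=[f.name for f in fields(CompanyProfile)])
    writer.writeheader()
    for profile in profiles:
        row = asdict(profile)
        for key, value in row.items():
            if isinstance(value, list):
                row[key] = "; ".join(value)
        writer.writerow(row)


def write_summary_csv(profiles: Iterable[CompanyProfile], out: TextIO) -> None:
    """Write only the key fields listed for the prompt's summary table."""
    writer = csv.writer(out)
    writer.writerow(SUMMARY_HEADER)
    for p in profiles:
        writer.writerow([p.company_name, p.country, p.company_type, p.year_established,
                         "; ".join(p.input_materials), "; ".join(p.end_products)])


if __name__ == "__main__":
    # Placeholder record for illustration only; not a real company.
    sample = json.dumps([{
        "company_name": "Example Recycler",
        "country": "Netherlands",
        "company_type": "PET Recycler",
        "year_established": "2010",
        "input_materials": ["post-consumer bottles"],
        "end_products": ["rPET flakes", "rPET pellets"],
        "sources": ["https://example.com/a", "https://example.com/b"],
    }])
    buffer = io.StringIO()
    write_summary_csv(load_profiles(sample), buffer)
    print(buffer.getvalue())
```

Loading records into a typed class means a misnamed or extra field fails loudly instead of silently producing a misaligned column in the client file.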

3. How It Played Out

What Worked Exceptionally Well

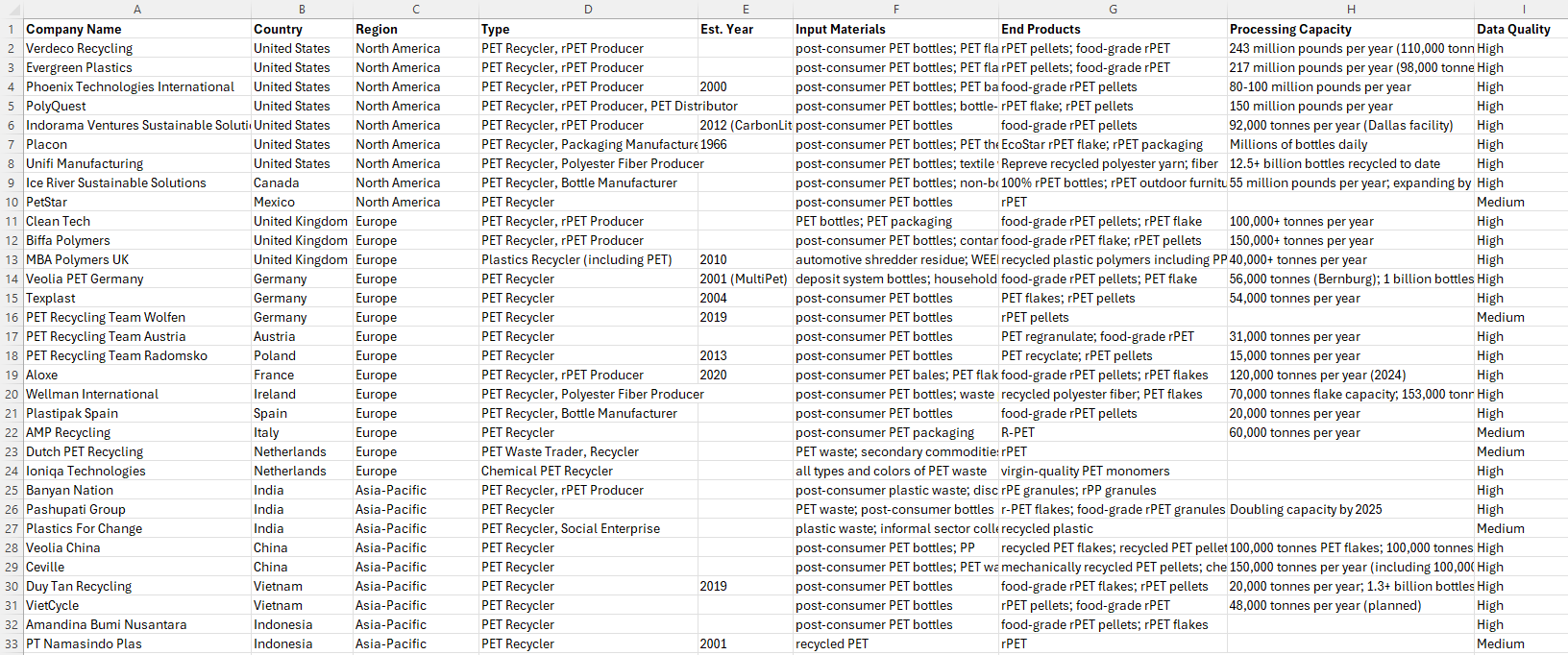

The output from Perplexity was impressive on the first run. No iterations needed. I received: The deliverables include profiles for 55 private companies with all requested data points, a master CSV list with complete company profiles, a summary table for quick scanning of key fields, a market overview covering regional breakdowns, industry clusters, and trends, and research notes documenting data gaps and confidence levels.

Here's the snapshot of the Master list given by Perplexity -

Time Saved: What would have taken perhaps a week manually took 45 minutes of AI-powered research, plus 20 minutes of verification.

The Numbers

Traditional approach: 3-4 days, possibly up to a week

AI approach: 45 minutes research + 20 minutes verification = ~65 minutes total

Time efficiency: roughly a 95-97% reduction in research time, assuming 8-hour working days

Initial output quality: 20 of the 55 companies were client-ready after my filtering
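The size of the reduction depends on how the manual baseline is converted into minutes. Here is a quick check in Python. It assumes 8-hour working days, which the post does not state, and compares the 65-minute AI workflow with manual baselines of 3, 4, and 5 working days:

```python
# Assumption: 8-hour working days. The 45 + 20 minutes come from the figures above.
HOURS_PER_DAY = 8
AI_MINUTES = 45 + 20  # research + verification


def reduction_pct(manual_days: float) -> float:
    """Percentage of manual time saved by the AI-assisted workflow."""
    manual_minutes = manual_days * HOURS_PER_DAY * 60
    return 100 * (1 - AI_MINUTES / manual_minutes)


def baseline_hours_for(target_pct: float) -> float:
    """Manual working hours needed for the savings to reach target_pct."""
    return AI_MINUTES / (1 - target_pct / 100) / 60


for days in (3, 4, 5):
    print(f"{days} working days: {reduction_pct(days):.1f}% reduction")
print(f"A 98% reduction needs a baseline of about {baseline_hours_for(98):.0f} working hours")
```

Under these assumptions the savings come to about 95.5%, 96.6%, and 97.3%. A figure of 98% or more implies a manual baseline of roughly 54 working hours, which is longer than a standard five-day week.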

Quality Assessment

The AI didn't just scrape random websites. It pulled from credible sources: industry associations, trade publications, and company websites. The structured format meant I could immediately work with the data rather than spending hours organizing it.

4. What I'd Do Differently

Key Learning: The 15-Minute Investment

The reason I got such high-quality output in one run was the time I spent creating the prompt. You don't need to be a prompt engineering expert; you need clarity on what you want.

What made my prompt effective:

Role assignment (implied: act as a senior research analyst)

Clear exclusions (no public companies, no general recyclers)

Specific output format (JSON structure, CSV deliverables)

Quality instructions ("verified sources", "cross-verify from 2+ sources", "confirm operational status")

Directive keywords ("comprehensive", "systematic", "market research standards")

When to Use This Approach

Ideal for:

Mapping fragmented industries with scattered data

Researching private companies where databases lack depth

Building market landscapes that require 50+ data points

Supporting time-sensitive due diligence projects

Not ideal for:

Deep financial analysis that depends on proprietary data

Real-time regulatory compliance checks

Tasks that require nuanced human judgment on qualitative factors

Skill Requirements

You need sufficient domain expertise to identify credible industry sources, detect outdated or incorrect information, judge which companies truly matter to the scope, and recognize what high-quality deliverables should look like.

AI amplifies your knowledge; it doesn't replace it.

5. Your Turn: How to Do This

Replicable Template

You can use my exact prompt structure if your task has similar requirements (company identification, market mapping, industry profiling).

To customize for your industry:

Replace "PET recycling" with your target industry

Adjust data fields in the prompt (keep what's relevant, add industry-specific fields)

Update industry associations and publications to match your sector

Modify geographic scope as needed

Change keywords to industry-specific search terms
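One way to apply these substitutions consistently is to treat the prompt as a template and fill it from a small configuration object. The Python sketch below uses hypothetical names (`ResearchConfig`, `build_prompt`, `PET_CONFIG`) that are not part of any tool. It renders an abbreviated version of my prompt's sections and, as a sanity check, fills them with the PET values used above.

```python
from dataclasses import dataclass


@dataclass
class ResearchConfig:
    """Industry-specific inputs for the prompt template (hypothetical structure)."""
    industry: str
    regions: list[str]
    exclusions: list[str]
    associations: list[str]
    publications: list[str]
    hubs: list[str]
    data_fields: list[str]
    keywords: list[str]
    min_companies: int = 50


def build_prompt(cfg: ResearchConfig) -> str:
    """Render an abbreviated version of the prompt structure for any industry."""
    schema = ",\n".join(f'  "{name}": ""' for name in cfg.data_fields)
    quoted_keywords = [f'"{k}"' for k in cfg.keywords]
    lines = [
        f"PROMPT: {cfg.industry} Company Research",
        "",
        f"OBJECTIVE: Identify and profile private {cfg.industry} companies globally. "
        "Create a comprehensive master list with verified data.",
        "",
        "SCOPE:",
        f"Geography: {', '.join(cfg.regions)}",
        f"Exclude: {', '.join(cfg.exclusions)}",
        "",
        "RESEARCH APPROACH:",
        f"- Search industry associations ({', '.join(cfg.associations)})",
        f"- Review trade publications ({', '.join(cfg.publications)})",
        f"- Target geographic hubs ({', '.join(cfg.hubs)})",
        "",
        "DATA TO COLLECT: For each company, gather:",
        "{",
        schema,
        "}",
        "",
        "QUALITY TARGETS:",
        f"- Minimum {cfg.min_companies} companies",
        "- Cross-verify information from 2+ sources",
        '- Flag uncertain data as "Approx." or "Unconfirmed"',
        "- Confirm companies are currently operational",
        "",
        f"SEARCH KEYWORDS: {', '.join(quoted_keywords)}",
        "",
        "BEGIN RESEARCH",
    ]
    return "\n".join(lines)


# Values copied from the PET prompt above; swap them out for another industry.
PET_CONFIG = ResearchConfig(
    industry="PET Recycling",
    regions=["North America", "Europe", "Asia-Pacific", "Latin America", "MEA"],
    exclusions=["Public companies", "government facilities",
                "general plastic recyclers without PET focus"],
    associations=["NAPCOR", "APR", "PRE"],
    publications=["Recycling Today", "Plastics News"],
    hubs=["California", "Netherlands", "China", "India"],
    data_fields=["company_name", "country", "city", "year_established",
                 "input_materials", "end_products", "sources"],
    keywords=["PET recycling company", "rPET manufacturer", "bottle-to-bottle recycling"],
)

if __name__ == "__main__":
    print(build_prompt(PET_CONFIG))
```

Keeping the industry-specific values in one place makes it harder to update the keywords but forget the associations or exclusions.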

My Recommended Workflow

Spend 5-10 minutes thinking through your approach before touching AI

Use Claude to pressure-test your approach and co-create the prompt

Execute in Perplexity (or similar research-focused AI)

Always verify the output before delivering to clients

Tool Recommendations

Prompt Creation: Claude (Extended Thinking on, Sonnet 4.5 model)

Research Execution: Perplexity Pro (Deep Research enabled)

Note: I'm not endorsing paid tools, just sharing what I used. There may be free alternatives depending on your needs.

6. The Validation Layer

How I Verified

After getting the AI output, I spent 20 minutes on verification:

Filtered for relevance: Narrowed the 55 companies to the 20 most relevant to client needs

Source checking: Verified that cited sources were active and accurate

Data accuracy: Spot-checked company details (location, products, operational status)

Cross-reference: For key companies, confirmed information across multiple sources
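Parts of this checklist can be scripted before the manual review. The Python sketch below is an illustration under stated assumptions, not the exact process I ran. It checks records (dicts using the prompt's field names) against the prompt's quality targets. It lists companies with fewer than two distinct sources or with "Approx."/"Unconfirmed" flags, and it draws a reproducible random sample for spot-checking. It treats the `region` field as a macro-region such as "Europe", which the prompt does not specify. The function names `audit` and `spot_check_sample` are hypothetical.

```python
import random

# Thresholds taken from the prompt's QUALITY TARGETS section.
MIN_COMPANIES = 50
MIN_REGIONS = 4
MIN_SOURCES = 2
UNCERTAIN_FLAGS = ("Approx.", "Unconfirmed")


def audit(profiles: list[dict]) -> dict:
    """Check the prompt's quality targets and list records that need manual follow-up."""
    # Assumption: "region" holds a macro-region such as "Europe" or "Asia-Pacific".
    regions = {p.get("region", "").strip() for p in profiles} - {""}
    needs_cross_check = [
        p["company_name"] for p in profiles
        if len(set(p.get("sources", []))) < MIN_SOURCES
    ]
    flagged_uncertain = [
        p["company_name"] for p in profiles
        if any(flag in str(value) for value in p.values() for flag in UNCERTAIN_FLAGS)
    ]
    return {
        "enough_companies": len(profiles) >= MIN_COMPANIES,
        "enough_regions": len(regions) >= MIN_REGIONS,
        "needs_cross_check": needs_cross_check,
        "flagged_uncertain": flagged_uncertain,
    }


def spot_check_sample(profiles: list[dict], k: int, seed: int = 0) -> list[dict]:
    """Pick up to k records at random; a fixed seed makes the sample reproducible."""
    return random.Random(seed).sample(profiles, min(k, len(profiles)))
```

Checks like these only narrow where to look first. Confirming operational status and judging relevance still require a person.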

The Reality Check

Did AI give me 100% client-ready output? No, and that's fine.

AI is not here to replace analysts. The analyst's role is critical for:

Validating AI results against ground truth

Filtering for relevance and quality

Drawing insights from the data

Understanding nuance that AI might miss

The AI handled most of the heavy lifting (data gathering, organization, and initial synthesis), while I focused on strategic filtering, verification, and final quality assurance.

Confidence Level

For investment decisions or client deliverables, I would use this AI-assisted approach with confidence, provided the verification step is never skipped. The prompt's built-in quality controls (cross-verification, source documentation, data quality flags) make the output trustworthy as a starting point, not an endpoint.

Final Thought

The real power of AI in research isn't just speed; it's the ability to systematically cover ground that would be impractical to cover manually. But the quality of your output is directly tied to the quality of your input. Invest 15 minutes in crafting a solid prompt, and you can save days of work.

Next post: I'll dive into another financial analysis workflow using AI. Stay tuned.