I spent an afternoon testing whether AI could handle something every equity analyst dreads: spreading historical revenue data and building revenue projections from scratch. The task? Extract Tesla's revenue by segment from their 2024 annual report, identify the key drivers, and set up a 5-year projection model.

Spoiler: The results surprised me, in both good and bad ways.

The Setup

The Task: I needed to spread Tesla's revenue and revenue drivers with projections through 2029 for a financial model. This meant:

Pulling historical revenue by segment from the 10-K and investor presentation

Identifying key revenue drivers (unit volumes, ASP, capacity metrics)

Calculating growth rates

Building a projection framework with driver-based assumptions
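For reference, the growth-rate step in the list above comes down to a few lines. This is a minimal sketch, assuming annual figures in chronological order; the function name `yoy_growth` and the revenue numbers are hypothetical placeholders, not Tesla's reported figures.

```python
def yoy_growth(values):
    """Return year-over-year growth rates for a chronological series.

    The first period has no prior year, so its growth rate is None.
    A prior-year value of zero also yields None to avoid dividing by zero.
    """
    rates = [None]
    for prev, curr in zip(values, values[1:]):
        rates.append(None if prev == 0 else curr / prev - 1)
    return rates


# Hypothetical segment revenue in $ millions (illustrative only).
revenue = [100.0, 120.0, 150.0]
growth = yoy_growth(revenue)
```

Keeping the first period as `None` rather than zero avoids implying flat growth for a year that simply has no comparison.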

Why I Tried AI: Excel AI tools have been marketing themselves as analyst replacements. This seemed like the perfect test case: not trivial, but not impossibly complex either. If they could nail this, maybe the hype has some truth to it.

My Background: I'm an advanced user, but I approached this like an intermediate analyst would. No fancy prompt engineering tricks, just straightforward instructions.

The AI Workflow

Tools I Tested:

Claude Opus 4.5 (Pro account)

Claude Sonnet 4.5

Try Shortcut (free version)

Tracelight (free version)

The Process:

I started by crafting a detailed prompt with Claude Sonnet 4.5's help (took about 2 minutes). Then I ran the same workflow across all four tools to compare.

Prompt 1 - Historical Data Extraction:

Extract Tesla's annual revenue data and key revenue drivers from the 2024 annual report and investor presentation, then organize it in Excel for financial modeling. Include total revenue and revenue by segment for all available years in the document, identify and extract the primary revenue drivers (such as unit volumes, pricing, or capacity metrics) that Tesla reports, calculate year-over-year growth rates, and structure the spreadsheet with historical data ready for forward projections.

I uploaded Tesla's full 2024 annual report and investor presentation to each tool and ran this prompt.

Prompt 2 - Projection Setup:

Set up or update 5-year revenue projections (2025-2029) in the Excel model by creating an Assumptions tab with growth rates and drivers (such as unit growth, pricing changes, market penetration, or capacity expansion), then build revenue forecasts by segment using these driver assumptions with clear formulas linking assumptions to revenue outputs.

Without reviewing the first output, I immediately ran this second prompt in the same chat.
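To make "driver-based" concrete, here is a rough sketch of the structure Prompt 2 asks for: assumptions live in one place and revenue is always computed from them. The `Assumptions` class, the `project_revenue` function, and every input number are hypothetical; none of the tools necessarily builds it this way.

```python
from dataclasses import dataclass


@dataclass
class Assumptions:
    unit_growth: float  # annual growth in units delivered, e.g. 0.10 for 10%
    asp_change: float   # annual change in average selling price


def project_revenue(base_units, base_asp, assumptions, years):
    """Roll units and ASP forward one year at a time.

    Revenue is always derived as units * ASP, never entered directly,
    mirroring formulas that link an Assumptions tab to a Projections tab.
    """
    rows = []
    units, asp = base_units, base_asp
    for year in years:
        units *= 1 + assumptions.unit_growth
        asp *= 1 + assumptions.asp_change
        rows.append({"year": year, "units": units, "asp": asp, "revenue": units * asp})
    return rows


# Hypothetical base-year inputs and assumptions (illustrative only).
projection = project_revenue(
    base_units=1000.0,
    base_asp=50.0,
    assumptions=Assumptions(unit_growth=0.10, asp_change=-0.02),
    years=range(2025, 2030),
)
```

Changing either assumption reprices every projected year, which is the behavior to look for when checking whether a tool linked its formulas properly.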

Figure 1

Figure 2: Opus created multiple sheets, which in my opinion were not needed and were not asked for in my prompt

Figure 3: Snapshot of the Try Shortcut output, which I found to be very clean, well formatted, and institutional grade

What Actually Worked

The Winners: Claude Opus 4.5 came out on top, with Try Shortcut (free version!) a close second. Tracelight was functional but rough around the edges. Sonnet 4.5 disappointed.

The Good Stuff:

Historical data extraction: All tools correctly pulled ~90% of the relevant historical data from the 10-K and investor presentation. A task that normally takes an hour of manual work was done in 15 minutes max.

Structure: Try Shortcut delivered the cleanest output, with two tabs (Assumptions and Projections), a clear layout, and institutional-grade formatting. Claude Opus matched the quality but created unnecessary additional tabs.

Formulas: Try Shortcut and Claude Opus built proper linking formulas between assumptions and revenue forecasts. Tracelight did this correctly too, just with messier presentation.

Speed: Even with a full annual report and investor deck uploaded, the tools processed and delivered in minutes.

One caveat: I used Try Shortcut's free version while Claude was on a Pro account. I suspect the paid version of Shortcut might match or exceed Opus.

What Didn't Work

Missing Granularity:

Here's the big one: none of the tools caught that some of the vehicles Tesla delivers are subject to operating lease accounting. The tools treated these vehicles identically to vehicles sold outright, which they are not. This is exactly the kind of nuance that separates a decent model from an institutional-grade one.
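A small sketch of why the distinction matters, assuming that revenue on leased vehicles is recognized over the lease term rather than at delivery, so those units should not sit in the denominator when you divide sales revenue by units. The function name and all numbers are hypothetical.

```python
def split_deliveries(total_deliveries, leased_deliveries):
    """Separate units sold outright from units under operating lease accounting."""
    if not 0 <= leased_deliveries <= total_deliveries:
        raise ValueError("leased deliveries must be between 0 and total deliveries")
    return {"sold": total_deliveries - leased_deliveries, "leased": leased_deliveries}


# Hypothetical figures (illustrative only).
units = split_deliveries(total_deliveries=1000, leased_deliveries=50)
sales_revenue = 47_500_000.0  # revenue from vehicles sold outright

asp_all_units = sales_revenue / 1000            # understated: includes leased units
asp_sold_units = sales_revenue / units["sold"]  # sold units only
```

In this made-up case, ignoring the lease split understates the implied price per vehicle by 5%, and that error carries into every projected year.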

Claude Opus 4.5 Issues:

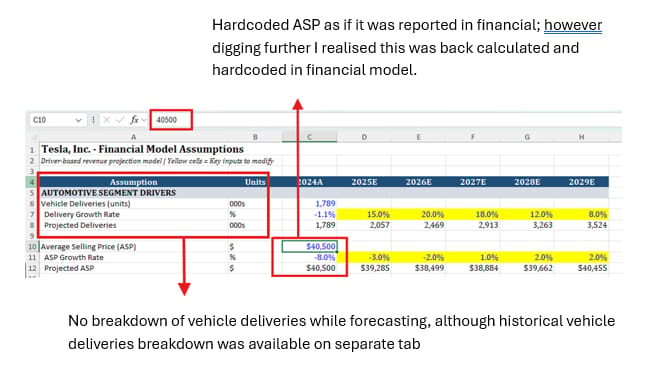

Hardcoded 2024 ASP: Opus calculated the 2024 average selling price and hardcoded it as if it were disclosed in the 10-K, but it wasn't. It back-calculated the figure from revenue and units, then treated it as a given. That's problematic.
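One way to keep a back-calculated figure honest is to carry its provenance with it. The sketch below is only an illustration of that idea; `implied_asp` and the inputs are hypothetical, and this is not what any of the tools produced.

```python
def implied_asp(revenue, units):
    """Back-calculate average selling price from revenue and units.

    The result is tagged as derived so it is not mistaken for a disclosed figure.
    """
    if units <= 0:
        raise ValueError("units must be positive")
    return {"value": revenue / units, "source": "derived: revenue / units"}


# Hypothetical inputs (illustrative only).
asp_2024 = implied_asp(revenue=50_000_000.0, units=1_000)
```

In a spreadsheet, the equivalent is leaving the ASP cell as a formula and labeling the row as implied rather than reported.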

Unnecessary complexity: Created multiple tabs when two would suffice. I didn't specify this in my prompt, but Try Shortcut intuited the right structure.

Lost granularity: Projected revenue for all vehicles together rather than by model. Try Shortcut and Tracelight maintained model-level detail.

Try Shortcut Issues:

Period labeling errors appeared after Prompt 1, but interestingly, these self-corrected when I ran Prompt 2 for projections. Not a major issue, but worth noting.

Tracelight Issues:

The modeling formulas were accurate, but the presentation wasn't institutional grade. An analyst would need significant time reformatting before sharing this with anyone. Good as a backup tool or for personal use, but needs polish.

Claude Sonnet 4.5 Issues:

Everything was hardcoded. No formulas. Just static numbers. Would require follow-up prompts to fix, but when other tools get it right the first time, why bother?

Key Learnings

The Honest Truth:

These tools can't replace analysts, and to be fair, they don't really claim to (the marketing hype is another story). But the amount of work they can handle, even with simple prompts, is genuinely impressive.

What I'd Do Differently:

Feed specific pages: Instead of uploading the entire 10-K, point the AI to specific sections. This might help with the granularity issues.

One task at a time: Running Prompts 1 and 2 as separate steps, with a review in between, would catch issues earlier.

Start with the best tools: Based on this test, I'd go straight to Try Shortcut or Claude Opus for financial modeling tasks.

Set formatting expectations: Adding "create two tabs: Assumptions and Projections" to the prompt would help control output structure.

When to Use vs. Not Use:

✅ Use these tools for:

Historical data extraction and organization

Setting up initial projection frameworks

Simple to intermediate complexity revenue models

Companies with straightforward revenue drivers

❌ Don't rely on them for:

Complicated multi-segment models without review

Catching accounting nuances (operating leases, revenue recognition complexities)

Final deliverables without analyst validation

High-stakes work where granularity matters

Critical Requirements:

Analyst review is non-negotiable. Period. You need someone who understands the business and the financials to validate formulas, check for missing nuances, and ensure the logic makes sense. The tools give you a 70-80% head start, not a finished product.

Practical Takeaways

Replicable Template:

This exact workflow should work for spreading revenue drivers at most companies. Here's how to adapt it:

For straightforward revenue models:

Use my Prompt 1 and 2 verbatim

Upload the latest 10-K and investor presentation

Start with Try Shortcut (free) or Claude Opus (paid)

Budget 30 minutes including review time

For complicated revenue models:

Break it down by segment: do one revenue stream at a time

Or specify the exact pages/sections you want the AI to focus on

Example modification: "Focus on pages 45-52 of the 10-K which discuss Automotive revenue"

Tool Recommendations:

Try Shortcut (free): Start here. Excellent formatting, good accuracy, fast. Upgrade to paid if you need it regularly.

Claude Opus 4.5 (Pro, ~$20/month): If you're already paying for Claude Pro, this is your go-to for financial modeling. Strong on complex analysis.

Tracelight (free): Good backup option. Accurate formulas but needs formatting work.

Claude Sonnet 4.5: Skip for Excel-heavy financial modeling. Better suited for other tasks.

The Validation Process

Here's my non-negotiable checklist after getting AI output:

Step 1: Historical Accuracy

Cross-check every historical number against the 10-K

Verify period labels (FY vs. CY, Q1 vs. Q4)

Check for accounting quirks the AI missed (leases, deferred revenue, etc.)
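Step 1 can be partly mechanized once you have keyed in the source figures yourself. A minimal sketch, assuming both the model output and your hand-checked numbers are available as label-to-value mappings; the labels, values, and `find_mismatches` name are hypothetical.

```python
def find_mismatches(model, source, tolerance=0.5):
    """Compare model values against hand-checked source values.

    Returns (label, model_value, source_value) for every label that is
    missing from the model or differs by more than the tolerance.
    """
    issues = []
    for label, expected in source.items():
        actual = model.get(label)
        if actual is None or abs(actual - expected) > tolerance:
            issues.append((label, actual, expected))
    return issues


# Hypothetical figures in $ millions (illustrative only).
source_figures = {"FY2023 Segment A": 100.0, "FY2024 Segment A": 120.0}
model_figures = {"FY2023 Segment A": 100.0, "FY2024 Segment A": 112.0}
mismatches = find_mismatches(model_figures, source_figures)
```

Including the period in each label means a mislabeled year shows up as a mismatch instead of passing silently.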

Step 2: Formula Logic

Trace assumption cells to projection cells

Verify growth rates are calculated correctly

Look for hardcoded numbers that should be formulas
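The hardcode check in Step 2 is easy to automate. The sketch below assumes the projection cells have already been read into a dictionary of cell address to raw content, with formulas stored as strings starting with "="; how you load the workbook is left open, and the cell contents shown are hypothetical.

```python
def find_hardcodes(cells):
    """Return the addresses of cells that hold a typed-in number.

    Formulas are strings beginning with "=", and text labels are other
    strings; only plain numeric content is flagged.
    """
    flagged = []
    for address, content in cells.items():
        is_number = isinstance(content, (int, float)) and not isinstance(content, bool)
        if is_number:
            flagged.append(address)
    return sorted(flagged)


# Hypothetical projection cells (illustrative only).
projection_cells = {
    "A1": "Revenue",
    "B2": "=Assumptions!B2*A2",
    "C2": 53900.0,
    "D2": "=C2*(1+Assumptions!B3)",
}
hardcodes = find_hardcodes(projection_cells)
```

Run it only over the projection range; historical inputs are supposed to be hardcoded, so flagging them would just add noise.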

Step 3: Business Logic

Do the projections make sense given the company's guidance?

Are the drivers reasonable? (e.g., don't project 50% unit growth when capacity only grows 10%)

Check for missing segments or revenue streams
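The capacity example in Step 3 can be written as a quick test. This is a rough sketch that assumes you have an estimate of current capacity utilization, which is my added input and not something the checklist specifies; the function name and numbers are hypothetical.

```python
def unit_growth_exceeds_capacity(unit_growth, capacity_growth, starting_utilization):
    """Return True if projected units would need more than 100% of capacity.

    Utilization after one year scales with unit growth and shrinks with
    capacity growth.
    """
    projected_utilization = (
        starting_utilization * (1 + unit_growth) / (1 + capacity_growth)
    )
    return projected_utilization > 1.0


# Hypothetical: 50% unit growth against 10% capacity growth at 80% utilization.
too_aggressive = unit_growth_exceeds_capacity(0.50, 0.10, 0.80)
# Hypothetical: unit growth in line with capacity growth.
reasonable = unit_growth_exceeds_capacity(0.10, 0.10, 0.80)
```

A flag here does not prove the projection is wrong, but it tells you which assumption to question first.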

Time Required: About 15-20 minutes of careful review for a model like this.

Confidence Level:

After review and corrections, I'd be comfortable using this as the foundation for an actual investment model. It's a solid starting point that saves significant time. But the keyword is "foundation": you're still building the upper floors yourself.

Bottom Line:

The right AI tool with sharp prompting can be a genuine force multiplier for analysts. But it amplifies your skills; it doesn't replace them. An experienced analyst using Try Shortcut or Claude Opus just became 2-3x more productive. A junior analyst without proper review? They just created a model full of hidden landmines.

The tools work. Just don't skip the validation.